With this MCP configuration file you will be able to use Smart Data Models locally in your AI agent.

{
  "mcpServers": {
    "smartdatamodels": {
      "type": "http",
      "serverUrl": "https://opendatamodels.org/mcp/v1"
    }
  }
}
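Where this configuration lives depends on the AI agent. Each MCP client reads its own JSON file, and clients differ in which keys they accept for remote servers. The following minimal Python sketch assumes a client that reads an `mcpServers` object like the one above, and shows how to merge the entry into an existing configuration without touching the other servers. The function names and the file path are hypothetical.

```python
import copy
import json
from pathlib import Path

# The server entry from the configuration above.
SDM_SERVER = {
    "smartdatamodels": {
        "type": "http",
        "serverUrl": "https://opendatamodels.org/mcp/v1",
    }
}


def add_sdm_server(config: dict) -> dict:
    """Return a copy of `config` with the Smart Data Models server added.

    Servers already listed under "mcpServers" are kept unchanged.
    """
    merged = dict(config)
    servers = dict(merged.get("mcpServers", {}))
    servers.update(copy.deepcopy(SDM_SERVER))
    merged["mcpServers"] = servers
    return merged


def update_config_file(path: Path) -> None:
    """Read the client's JSON config (if present), add the server, write it back."""
    config = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    path.write_text(json.dumps(add_sdm_server(config), indent=2), encoding="utf-8")
```

For example, `update_config_file(Path("mcp_config.json"))` adds the entry to a hypothetical `mcp_config.json` in the current directory and creates the file if it does not exist yet.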

Are you building AI applications for Smart Cities, Energy, or IoT? Interoperability is often the biggest hurdle—but a new tool is making it easier to keep your LLMs “in the loop” with global standards.

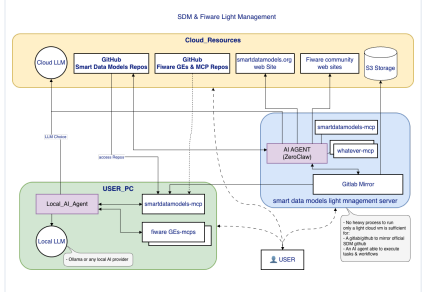

Introducing the Smart Data Models MCP Server, a new reference implementation that connects Large Language Models directly to the Smart Data Models ecosystem. Built on the Model Context Protocol (MCP), this server allows AI agents to browse, retrieve, and implement standardized data schemas in real-time.

Key Features:

Instant Schema Access: Give your LLM the ability to look up official data models for everything from street lighting to soil sensors.

Enhanced Accuracy: Reduce hallucinations by providing the AI with the exact JSON-LD and NGSI-LD structures required for your project.

Seamless Integration: Designed for easy setup with MCP-compatible clients (like Claude Desktop), enabling a smoother developer workflow for digital twin and IoT projects.

By providing LLMs with a “dictionary” of standardized data, the Smart Data Models MCP Server ensures that your AI-driven solutions are born interoperable.

Check it out on GitHub: agaldemas/smartdatamodels-mcp

A detailed explanation is available in these slides, which can be reached here. The architecture is shown below, and the README describes the easy configuration.

Here is the configuration file (there are several options):

{
  "mcpServers": {
    "smart-data-models-http": {
      "autoApprove": [],
      "disabled": false,
      "type": "streamableHttp",
      "timeout": 180,
      "url": "http://127.0.0.1:3210/mcp"
    }
  }
}
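MCP clients talk to this server by sending JSON-RPC 2.0 messages over HTTP POST. This is the Streamable HTTP transport that the `streamableHttp` type refers to. The minimal Python sketch below builds and sends the first message a client sends. It assumes the local server from this configuration is running at `http://127.0.0.1:3210/mcp`. The client name and the helper functions are hypothetical, and in practice an MCP client handles the full session for you.

```python
import json
import urllib.request

# Assumption: the local server from the configuration above is running.
MCP_URL = "http://127.0.0.1:3210/mcp"


def jsonrpc_request(method: str, params: dict, request_id: int) -> dict:
    """Build a JSON-RPC 2.0 request, the message format MCP uses."""
    return {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}


def initialize_request(request_id: int = 1) -> dict:
    """The first request an MCP client sends to open a session."""
    return jsonrpc_request(
        "initialize",
        {
            "protocolVersion": "2025-03-26",  # MCP revision that defines Streamable HTTP
            "capabilities": {},
            # Hypothetical client identity
            "clientInfo": {"name": "sdm-example-client", "version": "0.1.0"},
        },
        request_id,
    )


def post_message(url: str, message: dict, timeout: float = 180) -> tuple[str, str]:
    """POST one JSON-RPC message and return (Content-Type, raw response body).

    A Streamable HTTP server may reply with plain JSON or with a
    Server-Sent Events stream, so the client advertises both.
    """
    request = urllib.request.Request(
        url,
        data=json.dumps(message).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Accept": "application/json, text/event-stream",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.headers.get("Content-Type", ""), response.read().decode("utf-8")
```

When the server is running, `post_message(MCP_URL, initialize_request())` should return the server's capabilities. According to the MCP specification, the client then sends a `notifications/initialized` notification and can call `tools/list` to discover the Smart Data Models tools. If the server assigned an `Mcp-Session-Id` response header, the client repeats it on later requests.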

The source code is available here

Thanks to Alain Galdemas for the contribution

We are thrilled to announce a significant new release of our Python package, pysmartdatamodels, designed to empower developers and streamline the contribution process for our community.

This update includes new data models published up to 6-3-26.

You do not need to update the package: the following function updates the information about newly published data models:

from pysmartdatamodels import pysmartdatamodels as sdm

# Refresh the local copy of the data model information
sdm.update_data()

It takes several minutes, depending on your connection, because it updates about 140 MB of data.

The tool automates the generation of Smart Data Models (SDM) from visual models, bridging the gap between high-level domain design and technical implementation for Digital Twins and IoT ecosystems.

Input: Users define domain entities and relationships using B-UML (a simplified UML dialect) within the BESSER Pearl editor.

Transformation: The engine maps these models to the NGSI-LD standard and Schema.org vocabularies.

Output: For every entity, it automatically generates a compliant folder containing:

schema.json: The technical JSON Schema definition.

Examples: Multi-format payloads including JSON-LD, NGSI-v2, and Normalized NGSI-LD.

Documentation: Automatically derived human-readable specifications.

Interoperability: Ensures 100% compliance with ETSI NGSI-LD and SDM contribution guidelines.

Model-Driven Engineering (MDE): Moves the “source of truth” to a visual model, reducing manual coding errors in complex JSON-LD structures.

Efficiency: Accelerates the deployment of standardized data spaces by automating the boilerplate required for context brokers (e.g., Orion).
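The generated examples come in both key-values and normalized NGSI-LD forms. In the normalized form, every attribute becomes an object with an explicit type. The minimal Python sketch below illustrates the difference. It is a hypothetical helper written for illustration, not the generator's code, and it assumes simplified rules: `location`, `observationSpace` and `operationSpace` become GeoProperties, string-valued `refXxx` attributes become Relationships, and everything else becomes a Property.

```python
# Hypothetical helper: a simplified key-values -> normalized NGSI-LD mapping,
# written for illustration only (not the generator's own code).

CORE_MEMBERS = {"id", "type", "@context"}
GEO_PROPERTIES = {"location", "observationSpace", "operationSpace"}


def is_reference(name: str) -> bool:
    """Smart Data Models convention: refXxx attributes point to other entities."""
    return name.startswith("ref") and len(name) > 3 and name[3].isupper()


def to_normalized(entity: dict) -> dict:
    normalized = {}
    for name, value in entity.items():
        if name in CORE_MEMBERS:
            normalized[name] = value  # copied unchanged
        elif name in GEO_PROPERTIES:
            normalized[name] = {"type": "GeoProperty", "value": value}
        elif is_reference(name) and isinstance(value, str):
            normalized[name] = {"type": "Relationship", "object": value}
        else:
            normalized[name] = {"type": "Property", "value": value}
    return normalized


streetlight = {  # illustrative payload, not taken from a published example
    "id": "urn:ngsi-ld:Streetlight:001",
    "type": "Streetlight",
    "status": "ok",
    "location": {"type": "Point", "coordinates": [-3.7, 40.4]},
    "refStreetlightGroup": "urn:ngsi-ld:StreetlightGroup:A",
}
```

Calling `to_normalized(streetlight)` turns `status` into `{"type": "Property", "value": "ok"}` and `refStreetlightGroup` into `{"type": "Relationship", "object": "urn:ngsi-ld:StreetlightGroup:A"}`. A real generator also handles `observedAt`, units, nested properties and list-valued relationships, which this sketch ignores.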

Calling all SDM contributors!

To ensure a smooth integration process, we want to remind everyone that all contributions undergo automated testing prior to submission. To help you streamline your workflow and catch potential issues early, we’ve made our testing suite available for local use.

You can access the full source code for our testing framework under an open-source license. Running these tests on your own machine not only boosts the quality of your code but also saves valuable time for both you and the maintainers.

Download the Source: Head over to our repository to grab the testing suite.

Configure: Follow the simple instructions in the README. (Quick tip: You only need to update config.json with your specific local directories).

Run Anywhere: The suite is flexible—it supports testing against both remote repositories and local file systems.

By validating your work locally before you push, you’re helping us maintain a high standard for the SDM project.

Happy coding!
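Before running the full suite, a quick pre-check that uses only the standard library can catch a common slip: a missing or malformed example file. This is a hypothetical sketch, not part of the official testing suite. It assumes the usual data model folder layout, with a `schema.json` plus key-values and normalized examples under `examples/`. The official suite runs many more checks.

```python
import json
from pathlib import Path

# Assumed layout of a data model folder (an assumption of this sketch).
EXPECTED_FILES = [
    "schema.json",
    "examples/example.json",
    "examples/example.jsonld",
    "examples/example-normalized.json",
    "examples/example-normalized.jsonld",
]


def missing_files(model_dir: str) -> list[str]:
    """Return the expected files that are not present in `model_dir`."""
    root = Path(model_dir)
    return [name for name in EXPECTED_FILES if not (root / name).is_file()]


def invalid_json_files(model_dir: str) -> list[str]:
    """Return the expected files that exist but do not parse as JSON."""
    root = Path(model_dir)
    invalid = []
    for name in EXPECTED_FILES:
        path = root / name
        if path.is_file():
            try:
                json.loads(path.read_text(encoding="utf-8"))
            except (json.JSONDecodeError, UnicodeDecodeError):
                invalid.append(name)
    return invalid
```

For example, running `missing_files("dataModel.Weather/WeatherObserved")` on a local clone returns an empty list when all five files are in place. Anything it reports is worth fixing before you run the official suite.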

List of tests

A new data model, TourismPresenceObserved, has been added to the subject dataModel.TourismDestinations.

Thanks to the company ubiwhere.com for the contribution.

A new data model, TourismDwellTimeObserved, has been added to the subject dataModel.TourismDestinations.

Thanks to the company ubiwhere.com for the contribution.

Contributors of new data models can test them in their local repositories with the source code of the testing tool, which can also be used online:

Home -> tools -> test your data model

It has been updated to handle attributes coming from languageMap properties in NGSI-LD.
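In normalized NGSI-LD, a LanguageProperty stores its text in a `languageMap` (language tag to string) instead of a `value`. A validator that flattens attributes therefore needs a separate branch for this case. The minimal Python sketch below shows that branch. It is a hypothetical helper, not the testing tool's code. Keeping a single language, and falling back to the first entry when the requested one is absent, are assumptions of the sketch.

```python
def to_key_values(entity: dict, lang: str = "en") -> dict:
    """Flatten a normalized NGSI-LD entity into a key-values dictionary.

    A LanguageProperty carries a "languageMap" (language tag -> string)
    instead of a "value". Keeping one language, and falling back to the
    first entry when `lang` is absent, is a choice made for this sketch.
    """
    flat = {}
    for name, attr in entity.items():
        if name in ("id", "type", "@context") or not isinstance(attr, dict) or "type" not in attr:
            flat[name] = attr  # core members and plain values are copied
        elif attr["type"] == "Relationship":
            flat[name] = attr.get("object")
        elif attr["type"] == "LanguageProperty":
            language_map = attr.get("languageMap", {})
            flat[name] = language_map.get(lang, next(iter(language_map.values()), None))
        else:  # Property and GeoProperty
            flat[name] = attr.get("value")
    return flat
```

With an illustrative attribute such as `{"type": "LanguageProperty", "languageMap": {"en": "Old Town", "es": "Casco Antiguo"}}`, `to_key_values(entity, "es")` yields `"Casco Antiguo"` for that attribute.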

We are thrilled to announce a significant new release of our Python package, pysmartdatamodels, designed to empower developers and streamline the contribution process for our community.

This update is packed with new data models and, most notably, powerful new validation and testing services.

The heart of the Smart Data Models initiative is our comprehensive library of open-licensed data models. With this new release, we have expanded it further, adding new models across our thirteen domains, from Smart Cities to Smart Energy. This package continues to provide powerful functions to integrate more than 1,000 standardized data models into your projects, digital twins, and data spaces.

To improve the quality and speed of contributions, we are excited to launch a brand new service for our contributors. You can now automatically test your data models before submitting them. This automated validation ensures that new models comply with our standards, making the review and integration process smoother for everyone.

For those who want to integrate this validation into their own workflows, the source code for the testing tool is also available.

In addition to the new contributor tool, you can also use the online service to validate payloads against existing data model examples.

You can find all the details, explore the functions, and get the latest package from the Python Package Index (PyPI). The updated README file includes comprehensive documentation on all the new features.

We are committed to making data interoperability easier for everyone. These updates are a direct result of community feedback and the hard work of our contributors. A huge thank you to everyone who has helped make this possible!

In the data-models repository you can access the first version of Smart Data Models as a service. Thanks to the work done for the Cyclops project.

The available files wrap the pysmartdatamodels package in an API and add a service for the online validation of NGSI-LD payloads.

Here are the README contents, which explain this first version:

This project consists of two main components:

1. An API server (pysdm_api3.py) that provides access to Smart Data Models functionality
2. A demo script (demo_script2.py) that demonstrates the API endpoints

API server (pysdm_api3.py)

A RESTful API that interfaces with the pysmartdatamodels library to provide access to Smart Data Models functionality.

| Endpoint | Method | Description |
|---|---|---|
| /validate-url | GET | Validate a JSON payload from a URL against Smart Data Models |
| /subjects | GET | List all available subjects |
| /datamodels/{subject_name} | GET | List data models for a subject |
| /datamodels/{subject_name}/{datamodel_name}/attributes | GET | Get attributes of a data model |
| /datamodels/{subject_name}/{datamodel_name}/example | GET | Get an example payload of a data model |
| /search/datamodels/{name_pattern}/{likelihood} | GET | Search for data models by approximate name |
| /datamodels/exact-match/{datamodel_name} | GET | Find a data model by exact name |
| /subjects/exact-match/{subject_name} | GET | Check if a subject exists by exact name |
| /datamodels/{datamodel_name}/contexts | GET | Get @context(s) for a data model name |

The /validate-url endpoint performs comprehensive validation:

Demo script (demo_script2.py)

A simple interactive script that demonstrates the API endpoints by opening a series of pre-configured URLs in your default web browser.

Edit the my_web_urls list if needed, then run python demo_script2.py. The demo includes examples of:

pip install fastapi uvicorn httpx pydantic jsonschema

python pysdm_api3.py

python demo_script2.py

Edit the my_web_urls list in demo_script2.py to change which endpoints are demonstrated.
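The demo script's behaviour, opening pre-configured URLs in the default browser, can be sketched in a few lines of Python. This is a hypothetical reimplementation for illustration, not the contents of demo_script2.py. The URLs assume the API on port 8000, and the pause before each URL is an assumption about what "interactive" means here. The opener and pause functions are parameters, so the sketch can be exercised without a browser.

```python
import webbrowser
from typing import Callable, Sequence

# Hypothetical URLs; the actual my_web_urls list in demo_script2.py may differ.
my_web_urls = [
    "http://127.0.0.1:8000/subjects",
    "http://127.0.0.1:8000/datamodels/dataModel.Weather",
    "http://127.0.0.1:8000/search/datamodels/WeatherObs/0.8",
]


def run_demo(
    urls: Sequence[str],
    opener: Callable[[str], object] = webbrowser.open,
    pause: Callable[[str], object] = input,
) -> None:
    """Open each URL in turn, waiting for Enter before each one."""
    for number, url in enumerate(urls, start=1):
        pause(f"[{number}/{len(urls)}] Press Enter to open {url} ")
        opener(url)
```

Calling `run_demo(my_web_urls)` with the API running opens each endpoint in the browser, one per key press.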

License: Apache 2.0