We are thrilled to announce a significant new release of our Python package, pysmartdatamodels, designed to empower developers and streamline the contribution process for our community.

This update is packed with new data models and, most notably, powerful new validation and testing services.

What’s New in This Version?

An Expanded Library of Data Models

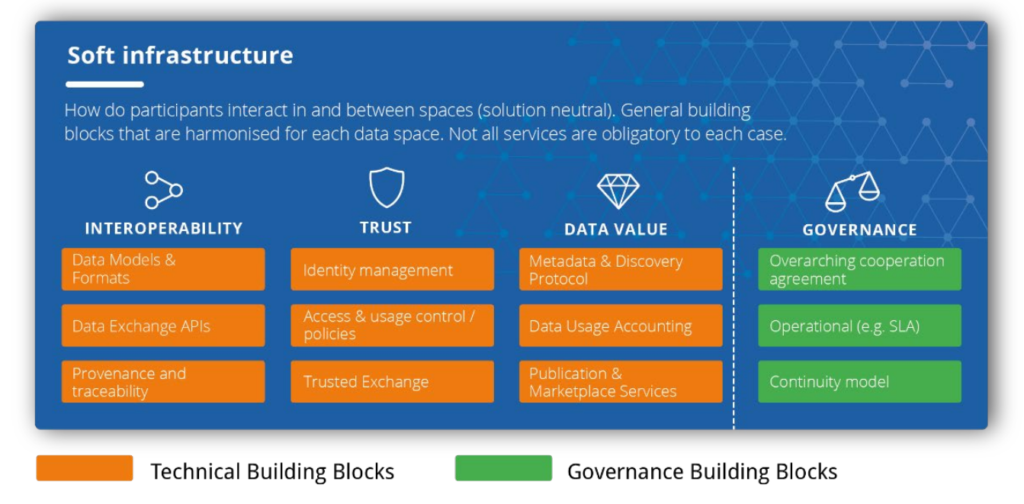

The heart of the Smart Data Models initiative is our comprehensive library of open-licensed data models. With this new release, we have expanded it further, adding new models across our thirteen domains, from Smart Cities to Smart Energy. This package continues to provide powerful functions to integrate more than 1,000 standardized data models into your projects, digital twins, and data spaces.

New! Automated Testing for Contributors

To improve the quality and speed of contributions, we are launching a brand-new service for our contributors: you can now automatically test your data models before submitting them. This automated validation checks that new models comply with our standards, making the review and integration process smoother for everyone.

For those who want to integrate this validation into their own workflows, the source code for the testing tool is also available.

Online Validation for Examples

In addition to the new contributor tool, you can also use the online service to validate example payloads against existing data models.
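As a rough illustration of what validating a payload involves, here is a minimal, standard-library-only sketch. It is not the code behind the online service. The `SIMPLIFIED_SCHEMA` dictionary and the `find_payload_problems` helper are hypothetical names invented for this example, and the schema is a heavily reduced stand-in for a real data model, which is a full JSON Schema document.

```python
import json

# Hypothetical, heavily simplified schema used only for illustration.
# Real Smart Data Models schemas are full JSON Schema documents.
SIMPLIFIED_SCHEMA = {
    "required": ["id", "type"],
    "properties": {
        "id": str,
        "type": str,
        "temperature": (int, float),
        "dateObserved": str,
    },
}


def find_payload_problems(payload, schema):
    """Return a list of problems; an empty list means the payload passed."""
    problems = []
    # Every required attribute must be present.
    for name in schema["required"]:
        if name not in payload:
            problems.append(f"missing required attribute: {name}")
    # Every attribute in the payload must be declared and have the expected type.
    for name, value in payload.items():
        expected = schema["properties"].get(name)
        if expected is None:
            problems.append(f"unknown attribute: {name}")
        # bool is a subclass of int in Python, so reject it explicitly;
        # no attribute in this simplified schema expects a boolean.
        elif isinstance(value, bool) or not isinstance(value, expected):
            problems.append(f"wrong type for attribute: {name}")
    return problems


good = json.loads(
    '{"id": "urn:ngsi-ld:WeatherObserved:001", '
    '"type": "WeatherObserved", "temperature": 21.5}'
)
bad = json.loads('{"type": "WeatherObserved", "temperature": "warm", "colour": "blue"}')

print(find_payload_problems(good, SIMPLIFIED_SCHEMA))  # []
print(find_payload_problems(bad, SIMPLIFIED_SCHEMA))
```

The second call reports three problems: the missing `id`, the non-numeric `temperature`, and the undeclared `colour` attribute. For real payloads, a complete JSON Schema validator run against the published schema of the data model would be the more appropriate choice.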

Get the Latest Version

You can find all the details, explore the functions, and get the latest package from the Python Package Index (PyPI). The updated README file includes comprehensive documentation on all the new features.

➡️ Get the package on PyPI
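If you are unsure whether the package is already installed, a short check along these lines can help. This is a sketch that relies only on Python's standard `importlib.metadata` module. The `installed_version` helper is a name made up for this example, and the `pip` command in the message is the usual way to install or upgrade a PyPI package rather than an instruction taken from the README.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package_name):
    """Return the installed version string, or None if the package is absent."""
    try:
        return version(package_name)
    except PackageNotFoundError:
        return None


found = installed_version("pysmartdatamodels")
if found is None:
    print("pysmartdatamodels is not installed; try: pip install --upgrade pysmartdatamodels")
else:
    print(f"pysmartdatamodels {found} is installed")
```

Comparing the reported version with the one listed on PyPI tells you whether an upgrade is needed.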

Our Commitment to Open and Interoperable Data

We are committed to making data interoperability easier for everyone. These updates are a direct result of community feedback and the hard work of our contributors. A huge thank you to everyone who has helped make this possible!